I still remember the smell of ozone and the sound of server fans screaming in a data center back in 2018, all because we tried to brute-force a massive library of 4K content using nothing but standard CPU clusters. We were burning through our budget, watching our latency spike while the “tried and true” methods just choked under the pressure. That was the moment I realized that trying to scale video delivery with general-purpose hardware is a recipe for financial disaster. If you aren’t looking seriously at ASIC-based video transcoding, you aren’t just working harder; you’re essentially throwing money into a furnace.

Table of Contents

- Why Application Specific Integrated Circuit Video Processing Dominates

- HEVC Hardware Acceleration Efficiency vs Legacy Systems

- 5 Pro Tips for Navigating the ASIC Transition

- The Bottom Line: Is ASIC Right for You?

- The Bottom Line on Silicon

- The Bottom Line on Specialized Silicon

- Frequently Asked Questions

Look, I’m not here to sell you on some shiny, overpriced enterprise dream or drown you in academic whitepapers. I’ve spent enough time in the trenches to know that the real world is messy and expensive. In this guide, I’m going to give you the straight truth about when specialized silicon actually pays off and when it’s just more vendor hype. No fluff, no marketing jargon—just the raw, experience-based reality of making your video pipeline actually work.

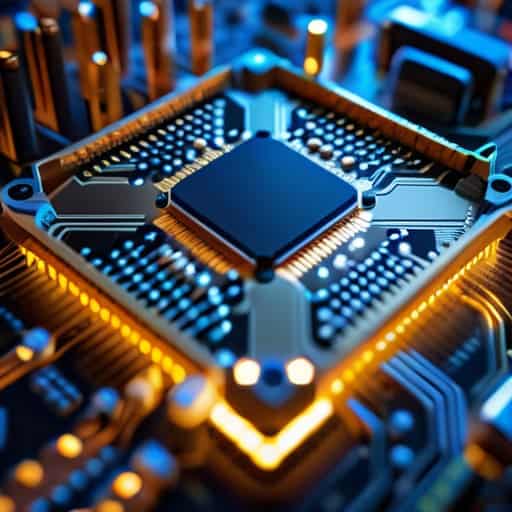

Why Application Specific Integrated Circuit Video Processing Dominates

Look, if you’re running a massive streaming platform, you eventually hit a wall where general-purpose CPUs just can’t keep up. When you’re trying to scale, the sheer math required for modern compression becomes a massive drain on your power bill and your hardware budget. This is where application-specific integrated circuit (ASIC) video processing changes the game. Unlike a CPU that tries to be a jack-of-all-trades, an ASIC is hardwired for one specific job: crunching pixels. Because the encoding logic is etched directly into the silicon, it can run demanding codecs like HEVC at a throughput per watt that software encoders on general-purpose CPUs struggle to match.

It really comes down to the math of scale. When comparing FPGA vs ASIC transcoding performance, the winner for high-volume environments is almost always the ASIC. While FPGAs offer great flexibility for prototyping, they carry a “flexibility tax” in terms of power consumption and physical footprint. ASICs strip away everything unnecessary, allowing you to squeeze more streams out of every rack unit. If your goal is aggressive cost-per-stream optimization, moving away from general-purpose silicon isn’t just a technical upgrade—it’s a financial necessity for staying competitive.
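To make the cost-per-stream math concrete, here is a minimal sketch that amortizes hardware over its lifetime and adds the electricity bill. Every number in it (prices, wattage, stream counts, the 36-month lifetime, the power rate) is a made-up placeholder rather than vendor data, and the `Node` class and `monthly_cost_per_stream` function are names invented for this illustration. Plug in your own figures before drawing conclusions.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # average number of hours in a month


@dataclass
class Node:
    """One transcoding server. All figures are placeholders, not vendor data."""
    name: str
    hardware_cost: float       # purchase price in dollars
    power_watts: float         # sustained draw under load
    concurrent_streams: int    # streams it can transcode at once
    lifetime_months: int = 36  # assumed amortization period


def monthly_cost_per_stream(node: Node, price_per_kwh: float) -> float:
    """Amortized hardware plus electricity, divided by stream capacity."""
    hardware = node.hardware_cost / node.lifetime_months
    energy_kwh = node.power_watts / 1000 * HOURS_PER_MONTH
    power = energy_kwh * price_per_kwh
    return (hardware + power) / node.concurrent_streams


# Hypothetical servers for comparison.
cpu = Node("cpu-server", hardware_cost=12_000, power_watts=600, concurrent_streams=20)
asic = Node("asic-server", hardware_cost=18_000, power_watts=300, concurrent_streams=200)

for node in (cpu, asic):
    cost = monthly_cost_per_stream(node, price_per_kwh=0.12)
    print(f"{node.name}: ${cost:.2f} per stream per month")
```

The model ignores cooling, rack rental, and staff time, so treat it as a first-pass comparison. The point is that stream density sits in the denominator, which is why a pricier box can still win on cost per stream.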

HEVC Hardware Acceleration Efficiency vs Legacy Systems

When you look at the shift from legacy CPU-based setups to hardware-accelerated HEVC, the difference isn’t just incremental; it’s massive. Older systems try to brute-force high-efficiency video coding through general-purpose processors, which is like trying to win a Formula 1 race in a minivan. You might eventually cross the finish line, but you’re burning an absurd amount of fuel (or in this case, electricity and rack space) to get there. By offloading the heavy mathematical lifting to specialized silicon, you stop fighting the architecture and start working with it.

This is where the real magic happens for anyone managing large-scale data center video infrastructure. FPGAs offer some flexibility, but when you’re after raw throughput in a high-density environment, the FPGA vs ASIC comparison almost always tilts toward the ASIC. You aren’t just getting faster encodes; you’re driving cost per stream down far enough that software-only stacks start to look like a liability on your balance sheet.

5 Pro Tips for Navigating the ASIC Transition

- Don’t chase every codec immediately. ASICs are specialized by design, so if your workflow is 90% H.264 and 10% AV1, don’t drop a fortune on an AV1-only chip that’ll sit idle most of the day.

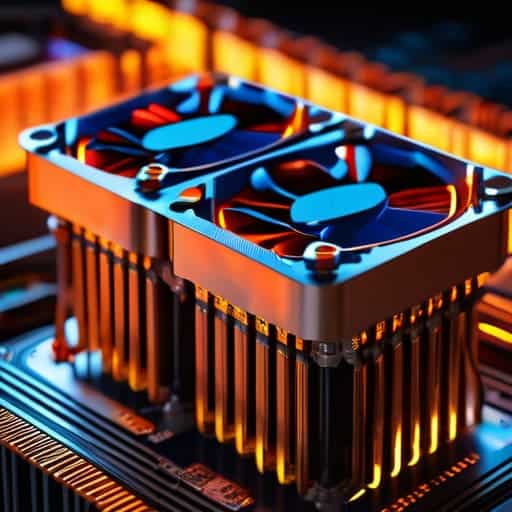

- Watch your thermal headroom. Because these chips pack so much density into a small area, they can run hot during sustained heavy loads. If you’re building a custom rack, don’t skimp on the airflow just because the silicon is “efficient.”

- Mind the “Black Box” problem. Unlike CPUs where you can tweak every little software parameter, ASICs often have fixed-function logic. You gain massive speed, but you lose the ability to fine-tune every encoder setting.

- Audit your end-to-end pipeline. There is no point in having a lightning-fast ASIC transcoder if your bottleneck is a slow SATA drive or a congested network switch feeding it data. The speed is only as good as your slowest link.

- Factor in the “Silicon Lifecycle.” Hardware evolves fast. When choosing an ASIC-based solution, make sure the vendor has a clear roadmap for the next generation of codecs, or you’ll find yourself holding a very expensive paperweight in two years.
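The pipeline audit from the tips above can be sketched in a few lines: list the sustained throughput of each stage and find the slowest one. The stage names and Mbps figures below are hypothetical placeholders, and `bottleneck` is a helper invented for this example. Measure your own stages under load before trusting any of it.

```python
def bottleneck(stage_throughput_mbps: dict[str, float]) -> tuple[str, float]:
    """Return the slowest stage and its throughput; the pipeline cannot go faster."""
    name = min(stage_throughput_mbps, key=stage_throughput_mbps.get)
    return name, stage_throughput_mbps[name]


# Hypothetical sustained throughput per stage, in megabits per second.
pipeline = {
    "storage_read": 4_000,
    "network_ingest": 10_000,
    "asic_transcode": 25_000,
    "network_egress": 10_000,
}

stage, mbps = bottleneck(pipeline)
utilization = mbps / pipeline["asic_transcode"]

print(f"Bottleneck: {stage} at {mbps} Mbps")
print(f"Transcoder can be kept at most {utilization:.0%} busy")
```

With these made-up numbers the storage tier starves the transcoder, so the money would be better spent on faster disks than on more silicon.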

The Bottom Line: Is ASIC Right for You?

Stop burning money on massive CPU clusters; if your workload is heavy on video encoding, specialized ASIC silicon delivers a massive leap in density and power efficiency that general-purpose chips simply can’t touch.

While CPUs offer unmatched flexibility for weird, edge-case codecs, ASICs are the undisputed kings of standardized, high-volume workflows like HEVC and AV1.

The real win isn’t just speed—it’s the math. By offloading the heavy lifting to dedicated hardware, you slash your operational costs and thermal footprint, making your infrastructure actually scalable.

The Bottom Line on Silicon

“At the end of the day, you can keep throwing more CPUs at your transcoding bottleneck, or you can stop fighting physics and just let specialized silicon do the heavy lifting. One is a money pit; the other is a competitive advantage.”

The Bottom Line on Specialized Silicon

At the end of the day, the shift toward ASIC-based transcoding isn’t just a passing trend; it’s a fundamental pivot in how we handle the massive data loads of the modern web. We’ve seen how traditional CPU-heavy workflows struggle to keep up with the sheer density of HEVC and AV1 streams, often leading to skyrocketing cloud bills and massive latency issues. By offloading the heavy lifting to specialized silicon, you aren’t just gaining a bit of speed; you are achieving a level of operational scalability that general-purpose processors struggle to match. It’s about moving away from the “brute force” method of computing and letting purpose-built hardware do the job it was designed for.

As we look toward a future defined by 8K streaming, VR, and even more demanding video formats, the question for engineers and CTOs isn’t whether to adopt ASIC technology, but how quickly they can integrate it. The era of wasting massive amounts of electricity and compute cycles on inefficient legacy systems is rapidly closing. Embracing specialized hardware is more than a technical upgrade; it is a strategic necessity for anyone serious about building a sustainable, high-performance media pipeline. Don’t get left behind trying to solve tomorrow’s problems with yesterday’s hardware—invest in the silicon that is built to win.

Frequently Asked Questions

Is the upfront cost of specialized ASIC hardware actually worth it for smaller-scale operations?

Here’s the honest truth: if you’re just running a handful of streams, the upfront sticker shock of ASIC hardware might feel like overkill, and at that volume it often is. But don’t let the initial invoice fool you. You have to look at the “cost per stream” over time. A beefy CPU server is cheaper today, but once your stream count outgrows it, the electricity bills and the need to keep adding servers will eat your margins alive. For small teams that expect to scale, ASICs are a hedge against future chaos.
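A rough way to sanity-check that trade-off is a break-even calculation: how many months of lower running costs does it take to repay the higher purchase price? The sketch below assumes hypothetical server prices, stream capacities, and monthly operating costs, and the function names are invented for this illustration.

```python
import math

# Hypothetical figures; replace with your own quotes and measurements.
CPU_STREAMS, CPU_PRICE, CPU_MONTHLY_OPEX = 20, 12_000, 150
ASIC_STREAMS, ASIC_PRICE, ASIC_MONTHLY_OPEX = 200, 18_000, 100


def fleet_costs(streams: int, per_server: int, price: float, opex: float) -> tuple[float, float]:
    """Upfront and monthly cost of enough servers to carry the given stream count."""
    servers = math.ceil(streams / per_server)
    return servers * price, servers * opex


def break_even_months(streams: int) -> float:
    """Months until the ASIC fleet's lower running cost repays its extra upfront cost."""
    cpu_upfront, cpu_monthly = fleet_costs(streams, CPU_STREAMS, CPU_PRICE, CPU_MONTHLY_OPEX)
    asic_upfront, asic_monthly = fleet_costs(streams, ASIC_STREAMS, ASIC_PRICE, ASIC_MONTHLY_OPEX)
    extra_upfront = asic_upfront - cpu_upfront
    monthly_savings = cpu_monthly - asic_monthly
    if extra_upfront <= 0:
        return 0.0       # the ASIC fleet is already cheaper on day one
    if monthly_savings <= 0:
        return math.inf  # the ASIC never pays for itself at this scale
    return extra_upfront / monthly_savings


for streams in (10, 20, 40, 200):
    print(f"{streams} streams: break-even after {break_even_months(streams)} months")
```

Under these assumptions a single lightly loaded box takes years to pay back, while the picture flips as soon as the CPU side needs a second server. The crossover point depends entirely on the numbers you feed in.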

How much flexibility am I losing by moving away from software-based CPU transcoding?

Look, I won’t sugarcoat it: you are trading agility for raw horsepower. With CPU transcoding, you can push a new codec or a weird experimental patch via a simple software update. With ASICs, you’re locked into whatever silicon is on the chip. If a new standard like AV1 becomes the industry baseline overnight, your hardware might become an expensive paperweight. You’re choosing specialized speed over the “do-anything” versatility of software.

Can these ASICs keep up with the rapid rollout of new codecs like AV1?

That’s the million-dollar question. The short answer? It’s a gamble. The beauty of ASICs is their raw efficiency, but that’s also their Achilles’ heel. Because the logic is literally etched into the silicon, they can’t “learn” a new codec via a software update. Firmware updates can sometimes tune the encoder, but they can’t add a codec the chip wasn’t built for. If you bet big on an H.265 chip and AV1 becomes the industry standard overnight, your hardware becomes an expensive paperweight. You’re trading flexibility for raw power.
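One way to size that gamble is to check how much of your workload a chip’s fixed codec set covers, both today and under a codec shift you think is plausible. This is a minimal sketch: the 90/10 H.264/AV1 split echoes the earlier tip, while the future mix and the chip’s supported codecs are pure assumptions, and `hardware_coverage` is a name made up for this example.

```python
def hardware_coverage(workload_mix: dict[str, float], supported: set[str]) -> float:
    """Fraction of the workload that the chip's fixed codec set can encode."""
    total = sum(workload_mix.values())
    covered = sum(share for codec, share in workload_mix.items() if codec in supported)
    return covered / total


# Share of encode jobs per codec.
today = {"h264": 0.90, "av1": 0.10}
later = {"h264": 0.50, "hevc": 0.20, "av1": 0.30}  # hypothetical future mix

chip = {"h264", "hevc"}  # codecs baked into a hypothetical ASIC

for label, mix in (("today", today), ("later", later)):
    share = hardware_coverage(mix, chip)
    print(f"{label}: {share:.0%} on the ASIC, {1 - share:.0%} falls back to software")
```

Whatever the chip cannot handle still needs CPU capacity, so the uncovered share is the part of your old software stack you cannot retire.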